Marine Heatwave Detection¶

Marine heatwaves are periods of persistent anomalously warm ocean temperatures, which can have significant impacts on marine life as well as coastal communities and economies. To detect the warm ocean water, sea surface temperature (SST) is usually used to define if there is any marine heatwave event. The following example is following the paper Jacox et al., 2022

Overview¶

In this page/notebook, we will be go throught the following steps

Extract the data from the PSL OPeNDAP server

Calculate the SST climatology

Calculate the SST anomaly

Determine the SST threshold based on the anomaly

Identify the marine heatwaves based on threshold

Prerequisites¶

To better understand and follow the steps in the notebook, it will be helpful for user to go through

| Concepts | Importance | Notes |

|---|---|---|

| Xarray | Helpful | Chunking and OPeNDAP access |

Time to learn: 15 minutes.

System requirements:

python

Xarray

pydap (not imported but will be used in the Xarray backend)

matplotlib (not imported but will be used in the Xarray plotting)

Numpy

plotly (only for the final interactive plot)

Imports¶

# import the needed packages

import warnings

import xarray as xr

import numpy as np

import plotly.express as pxwarnings.simplefilter("ignore")warnings.simplefilter

This line of code is not affecting the execution but just removing some of the warning output that might clutter your notebook. However, do pay attention to some of the warnings since they will indicate some deprecation of function or arg/kwarg in future update.Extract the data from an OPeNDAP server¶

In this page/notebook, we demonstrate how to use the NOAA OISST v2 High-resolution dataset to detect marine heatwaves. The dataset is currently hosted by NOAA Physical Sciences Laboratory.

Info

To explore more gridded datasets that are hosted at NOAA PSL, here is a useful search toolopendap_mon_url = "https://psl.noaa.gov/thredds/dodsC/Datasets/noaa.oisst.v2.highres/sst.mon.mean.nc"Xarray getting remote data¶

Xarray has a great support on accessing data in the cloud.

It has been continue to expend its capability and functionality with the community discussion like this.

Here we use the xr.open_dataset method with the keyword argument (engine='pydap') to use the pydap package in the backend to access the OPeNDAP server.

ds_mon = xr.open_dataset(opendap_mon_url, engine='pydap', chunks={'time':12,'lon':-1,'lat':-1})Lazy Loading

We can load the data lazily (only loading the metadata and coordinates information) and peek at the data's dimension and availability on our local machine. The actual data (SST values at each grid point in this case) will only be downloaded from the PSL server when further data manipulation (subsetting and aggregation like calculating mean) is needed. The only thing user needs to do to activate this function is to read the netCDF file using the `xr.open_dataset()` method with the keyword argument `chunks={'time':12,'lon':-1,'lat':-1}` provided. The chunk reading approach provide the opportunity to reduce the memory usage on the local machine during the calculation, the possibility of parallelizing the processes, and side-stepping the data download limit set by the OPeNDAP server (PSL server has a 500MB limit). The dataset is loaded lazily (only metadata and coordinates) shown below.In our example, we set the size of each chunk to be 12(time)x1440(lon)x720(lat) (when setting the chunk size = -1, it will use the length of the dimension as the chunksize) which is equal to 47.46 MB of data while the entire dataset is 1.39 GB. This allows us to get data in 47.46 MB chunk per download request.

The dataset is loaded lazily (only metadata and coordinates) shown below.

ds_monCalculate the SST climatology¶

First, we need to define the period that we are going to use to calculate the climatology. Here, we picked the 2019-2020 period to calculate the climatology.

Climatology

For a more accurate and scientifically valid estimate of marine heatwaves, one should usually consider a climatology period of at least 30 years. Here we set the climatology period from 2019 to 2020 (2 years) to speed up the processing time and for demonstration only. The shorter period (less memory consumption) also makes the interactive notebook launch on this page available for the user to manipulate and play with the dataset.climo_start_yr = 2019 # determine the climatology/linear trend start year

climo_end_yr = 2020 # determine the climatology/linear trend end year

ds_mon_crop = ds_mon.where((ds_mon['time.year']>=climo_start_yr)&

(ds_mon['time.year']<=climo_end_yr),drop=True)ds_mon_cropTo calculate the SST monthly climatology, we utilize the groupby method from Xarray.

ds_mon_climo = ds_mon_crop.groupby('time.month').mean()Calculate the SST anomaly¶

After the climatology is determined, we subtract the climatology from the original data to get the anomaly.

ds_mon_anom = (ds_mon_crop.groupby('time.month')-ds_mon_climo).compute()---------------------------------------------------------------------------

ProtocolError Traceback (most recent call last)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/requests/models.py:822, in Response.iter_content.<locals>.generate()

821 try:

--> 822 yield from self.raw.stream(chunk_size, decode_content=True)

823 except ProtocolError as e:

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/urllib3/response.py:1250, in HTTPResponse.stream(self, amt, decode_content)

1249 if self.chunked and self.supports_chunked_reads():

-> 1250 yield from self.read_chunked(amt, decode_content=decode_content)

1251 else:

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/urllib3/response.py:1418, in HTTPResponse.read_chunked(self, amt, decode_content)

1417 else:

-> 1418 self._update_chunk_length()

1419 if self.chunk_left == 0:

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/urllib3/response.py:1344, in HTTPResponse._update_chunk_length(self)

1342 else:

1343 # Truncated at start of next chunk

-> 1344 raise ProtocolError("Response ended prematurely") from None

ProtocolError: Response ended prematurely

During handling of the above exception, another exception occurred:

ChunkedEncodingError Traceback (most recent call last)

Cell In[9], line 1

----> 1 ds_mon_anom = (ds_mon_crop.groupby('time.month')-ds_mon_climo).compute()

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/core/dataset.py:803, in Dataset.compute(self, **kwargs)

799 DataArray.compute

800 Variable.compute

801 """

802 new = self.copy(deep=False)

--> 803 return new.load(**kwargs)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/core/dataset.py:569, in Dataset.load(self, **kwargs)

565 if chunked_data:

566 chunkmanager = get_chunked_array_type(*chunked_data.values())

567

568 # evaluate all the chunked arrays simultaneously

--> 569 evaluated_data: tuple[np.ndarray[Any, Any], ...] = chunkmanager.compute(

570 *chunked_data.values(), **kwargs

571 )

572

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/namedarray/daskmanager.py:85, in DaskManager.compute(self, *data, **kwargs)

80 def compute(

81 self, *data: Any, **kwargs: Any

82 ) -> tuple[np.ndarray[Any, _DType_co], ...]:

83 from dask.array import compute

---> 85 return compute(*data, **kwargs)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/dask/base.py:685, in compute(traverse, optimize_graph, scheduler, get, *args, **kwargs)

682 expr = expr.optimize()

683 keys = list(flatten(expr.__dask_keys__()))

--> 685 results = schedule(expr, keys, **kwargs)

687 return repack(results)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/core/indexing.py:686, in ImplicitToExplicitIndexingAdapter.__array__(self, dtype, copy)

682 def __array__(

683 self, dtype: DTypeLike | None = None, /, *, copy: bool | None = None

684 ) -> np.ndarray:

685 if Version(np.__version__) >= Version("2.0.0"):

--> 686 return np.asarray(self.get_duck_array(), dtype=dtype, copy=copy)

687 else:

688 return np.asarray(self.get_duck_array(), dtype=dtype)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/core/indexing.py:691, in ImplicitToExplicitIndexingAdapter.get_duck_array(self)

690 def get_duck_array(self):

--> 691 return self.array.get_duck_array()

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/core/indexing.py:924, in CopyOnWriteArray.get_duck_array(self)

923 def get_duck_array(self):

--> 924 return self.array.get_duck_array()

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/coding/variables.py:71, in NativeEndiannessArray.get_duck_array(self)

70 def get_duck_array(self):

---> 71 return duck_array_ops.astype(self.array.get_duck_array(), dtype=self.dtype)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/coding/common.py:80, in _ElementwiseFunctionArray.get_duck_array(self)

79 def get_duck_array(self):

---> 80 return self.func(self.array.get_duck_array())

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/core/indexing.py:764, in LazilyIndexedArray.get_duck_array(self)

761 from xarray.backends.common import BackendArray

763 if isinstance(self.array, BackendArray):

--> 764 array = self.array[self.key]

765 else:

766 array = apply_indexer(self.array, self.key)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/backends/pydap_.py:51, in PydapArrayWrapper.__getitem__(self, key)

50 def __getitem__(self, key):

---> 51 return indexing.explicit_indexing_adapter(

52 key, self.shape, indexing.IndexingSupport.BASIC, self._getitem

53 )

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/core/indexing.py:1156, in explicit_indexing_adapter(key, shape, indexing_support, raw_indexing_method)

1134 """Support explicit indexing by delegating to a raw indexing method.

1135

1136 Outer and/or vectorized indexers are supported by indexing a second time

(...) 1153 Indexing result, in the form of a duck numpy-array.

1154 """

1155 raw_key, numpy_indices = decompose_indexer(key, shape, indexing_support)

-> 1156 result = raw_indexing_method(raw_key.tuple)

1157 if numpy_indices.tuple:

1158 # index the loaded duck array

1159 indexable = as_indexable(result)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/backends/pydap_.py:56, in PydapArrayWrapper._getitem(self, key)

55 def _getitem(self, key):

---> 56 result = robust_getitem(self.array, key, catch=ValueError)

57 result = np.asarray(result.data)

58 axis = tuple(n for n, k in enumerate(key) if isinstance(k, integer_types))

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/xarray/backends/common.py:300, in robust_getitem(array, key, catch, max_retries, initial_delay)

298 for n in range(max_retries + 1):

299 try:

--> 300 return array[key]

301 except catch:

302 if n == max_retries:

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/pydap/model.py:1466, in GridType.__getitem__(self, key)

1464 else:

1465 if not self.output_grid:

-> 1466 return self.array[key]

1468 if not isinstance(key, tuple):

1469 key = (key,)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/pydap/model.py:538, in BaseType.__getitem__(self, index)

535 return out

537 out = copy.copy(self)

--> 538 data = self._get_data_index(index)

540 # Check if index is a full slice (e.g., [:], ..., or tuple of all slice(None))

541 if (

542 index == slice(None)

543 or index == Ellipsis

544 or (isinstance(index, tuple) and all(i == slice(None) for i in index))

545 ):

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/pydap/model.py:587, in BaseType._get_data_index(self, index)

583 return np.vectorize(decode_np_strings, otypes=self._data.dtype.char)(

584 self._data[index]

585 )

586 else:

--> 587 return self._get_data()[index]

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/pydap/handlers/dap.py:490, in BaseProxyDap2.__getitem__(self, index)

480 logger.info("Fetching URL: %s" % url)

481 r = GET(

482 url,

483 self.application,

(...) 487 get_kwargs=self.get_kwargs,

488 )

--> 490 dds, data = safe_dds_and_data(r, self.user_charset)

492 # Parse received dataset:

493 dataset = dds_to_dataset(dds)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/pydap/handlers/dap.py:419, in safe_dds_and_data(r, user_charset)

417 dds = _dds.decode(get_charset(r, user_charset))

418 elif isinstance(r, requests.Response):

--> 419 raw = r.content

420 _dds, data = raw.split(b"\nData:\n", 1)

421 dds = _dds.decode(user_charset)

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/requests/models.py:904, in Response.content(self)

902 self._content = None

903 else:

--> 904 self._content = b"".join(self.iter_content(CONTENT_CHUNK_SIZE)) or b""

906 self._content_consumed = True

907 # don't need to release the connection; that's been handled by urllib3

908 # since we exhausted the data.

File ~/micromamba/envs/marine-heatwave-cookbook-dev/lib/python3.14/site-packages/requests/models.py:824, in Response.iter_content.<locals>.generate()

822 yield from self.raw.stream(chunk_size, decode_content=True)

823 except ProtocolError as e:

--> 824 raise ChunkedEncodingError(e)

825 except DecodeError as e:

826 raise ContentDecodingError(e)

ChunkedEncodingError: Response ended prematurely.compute()

Notice the `.compute()` method in the code above. The data of SST is only loaded chunk-by-chunk, cropped to the desired period, averaged in the group of months, and finally subtracted the climatology from the original data when we execute the `.compute()` line. All these tasks are now executed in the background with a distributed server assigning tasks to different CPUs.ds_mon_anomDetermine the SST threshold based on the anomaly¶

Based on the Jacox et al., 2022, the threshold is determined based on a three month window with the center month being the monthly threhold one need to determined (e.g. January threshold is determined by all December, January, Feburary SST anomalies). Therefore, the function below is written to perform the three months window percentile operation.

########## Functions #########

# Function to calculate the 3 month rolling Quantile

def mj_3mon_quantile(da_data, mhw_threshold=90.):

da_data_quantile = xr.DataArray(coords={'lon':da_data.lon,

'lat':da_data.lat,

'month':np.arange(1,13)},

dims = ['month','lat','lon'])

for i in range(1,13):

if i == 1:

mon_range = [12,1,2]

elif i == 12 :

mon_range = [11,12,1]

else:

mon_range = [i-1,i,i+1]

da_data_quantile[i-1,:,:] = (da_data

.where((da_data['time.month'] == mon_range[0])|

(da_data['time.month'] == mon_range[1])|

(da_data['time.month'] == mon_range[2]),drop=True)

.quantile(mhw_threshold*0.01, dim = 'time', skipna = True))

return da_data_quantile

%time da_mon_quantile = mj_3mon_quantile(ds_mon_anom.sst, mhw_threshold=90)Tip

The `%time` command is jupyter cell magic to time the one-liner cell operation. It provides a great way to find the bottleneck of your data processing steps.The determined threshold value of each grid of each month is shown below

da_mon_quantile.isel(month=0).plot(vmin=0,vmax=3)Identify the marine heatwaves based on threshold¶

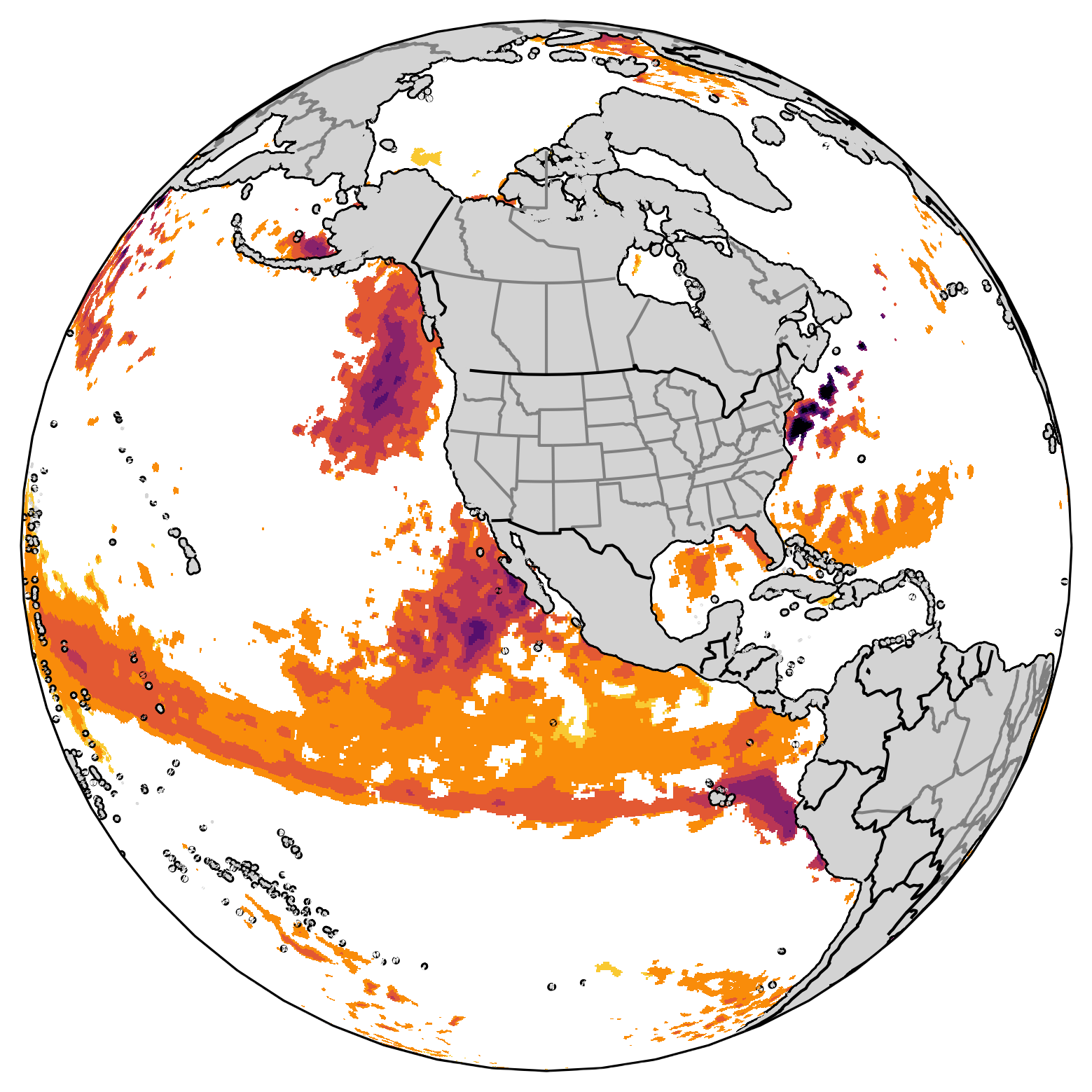

The figure below shows the original SST anomaly value for the first month.

ds_mon_anom.sst.isel(time=0).plot(vmin=0,vmax=3)To identify the marine heatwaves based on the monthly threshold, we use the where method to find the monthly marine heatwaves with the grid that has SST anomaly below the threshold to be masked as Not-a-Number.

da_mhw = ds_mon_anom.sst.where(ds_mon_anom.sst.groupby('time.month')>da_mon_quantile)The figure below shows the SST anomalous values that are above the monthly thresholds for the first months.

da_mhw.isel(time=0).plot(vmin=0,vmax=3)Interactive plot¶

The interactive plot is a great tool for looking at a local changes through zoom in.

Plotly provides a great interface for the user to also pin point the actual value at the point where they are interested in.

The only thing that need further data manipulation for using the plotly tool is to convert the Xarray DataArray to Pandas DataFrame.

However, this can be easily achieved throught the method .to_dataframe() provided by the Xarray package.

dff = (da_mhw.isel(time=0)

.to_dataframe()

.reset_index()

.dropna()

)

dff = dff.rename(columns={'sst':'MHW magnitude'})Plotly setting¶

After the DataFrame is created the plotly map can be created. Here, we are only using some simple options. More detail setups and options can be find on the Plotly documentation

# Setup the scatter mapbox detail

center = {'lat':38,'lon':-94} # center of the map

zoom = 2 # zoom level of the map

marker_size = 8 # marker size used on the map

mapbox_style = 'carto-positron' # mapbox options

fig = px.scatter_mapbox(dff,

lon = 'lon',

lat = 'lat',

color = 'MHW magnitude',

color_continuous_scale = 'orrd'

)

fig.update_layout(

mapbox={

'style': mapbox_style,

'center': center,

'zoom': zoom,

}

)

# Update the marker size using update_traces

fig.update_traces(marker=dict(size=marker_size))

Summary¶

Through this example, we demostrate how to lazily loaded a real world SST data from a OPeNDAP server and calculate the threshold that help us define the marine heatwave. By using the threshold, we can find the marine heatwave in each month.

What’s next?¶

A more interactive figures to view the marine heatwave will be added.

- Jacox, M. G., Alexander, M. A., Amaya, D., Becker, E., Bograd, S. J., Brodie, S., Hazen, E. L., Pozo Buil, M., & Tommasi, D. (2022). Global seasonal forecasts of marine heatwaves. Nature, 604(7906), 486–490. 10.1038/s41586-022-04573-9