Generating virutal datasets from GeoTiff files

Overview¶

In this tutorial we will cover:

How to generate virtual datasets from GeoTIFFs.

Combining virtual datasets.

Prerequisites¶

| Concepts | Importance | Notes |

|---|---|---|

| Basics of virtual Zarr stores | Required | Core |

| Multi-file virtual datasets with VirtualiZarr | Required | Core |

| Parallel virtual dataset creation with VirtualiZarr, Kerchunk, and Dask | Required | Core |

| Introduction to Xarray | Required | IO/Visualization |

Time to learn: 30 minutes

About the Dataset¶

The Finish Meterological Institute (FMI) Weather Radar Dataset is a collection of GeoTIFF files containing multiple radar specific variables, such as rainfall intensity, precipitation accumulation (in 1, 12 and 24 hour increments), radar reflectivity, radial velocity, rain classification and the cloud top height. It is available through the AWS public data portal and is updated frequently.

More details on this dataset can be found here.

import logging

from datetime import datetime

import dask

import fsspec

import rioxarray

import s3fs

import xarray as xr

from distributed import Client

from virtualizarr import open_virtual_dataset---------------------------------------------------------------------------

ImportError Traceback (most recent call last)

Cell In[1], line 10

6 import rioxarray

7 import s3fs

8 import xarray as xr

9 from distributed import Client

---> 10 from virtualizarr import open_virtual_dataset

File ~/micromamba/envs/kerchunk-cookbook/lib/python3.14/site-packages/virtualizarr/__init__.py:3

1 from importlib.metadata import version as _version

----> 3 from virtualizarr.accessor import (

4 VirtualiZarrDatasetAccessor,

5 VirtualiZarrDataTreeAccessor,

6 )

7 from virtualizarr.xarray import (

8 open_virtual_dataset,

9 open_virtual_datatree,

10 open_virtual_mfdataset,

11 )

13 try:

File ~/micromamba/envs/kerchunk-cookbook/lib/python3.14/site-packages/virtualizarr/accessor.py:17

7 from typing import (

8 TYPE_CHECKING,

9 Callable,

(...) 12 overload,

13 )

15 import xarray as xr

---> 17 from virtualizarr.manifests import ManifestArray

18 from virtualizarr.types.kerchunk import KerchunkStoreRefs

19 from virtualizarr.writers.kerchunk import dataset_to_kerchunk_refs

File ~/micromamba/envs/kerchunk-cookbook/lib/python3.14/site-packages/virtualizarr/manifests/__init__.py:4

1 # Note: This directory is named "manifests" rather than "manifest".

2 # This is just to avoid conflicting with some type of file called manifest that .gitignore recommends ignoring.

----> 4 from virtualizarr.manifests.array import ManifestArray # type: ignore # noqa

5 from virtualizarr.manifests.group import ManifestGroup # type: ignore # noqa

6 from virtualizarr.manifests.manifest import ChunkEntry, ChunkManifest # type: ignore # noqa

File ~/micromamba/envs/kerchunk-cookbook/lib/python3.14/site-packages/virtualizarr/manifests/array.py:6

4 import numpy as np

5 import xarray as xr

----> 6 from zarr.core.metadata.v3 import ArrayV3Metadata, RegularChunkGrid

8 import virtualizarr.manifests.utils as utils

9 from virtualizarr.manifests.array_api import (

10 MANIFESTARRAY_HANDLED_ARRAY_FUNCTIONS,

11 _isnan,

12 )

ImportError: cannot import name 'RegularChunkGrid' from 'zarr.core.metadata.v3' (/home/runner/micromamba/envs/kerchunk-cookbook/lib/python3.14/site-packages/zarr/core/metadata/v3.py)Examining a Single GeoTIFF File¶

Before we use Kerchunk to create indices for multiple files, we can load a single GeoTiff file to examine it.

# URL pointing to a single GeoTIFF file

url = "s3://fmi-opendata-radar-geotiff/2023/07/01/FIN-ACRR-3067-1KM/202307010100_FIN-ACRR1H-3067-1KM.tif"

# Initialize a s3 filesystem

fs = s3fs.S3FileSystem(anon=True)

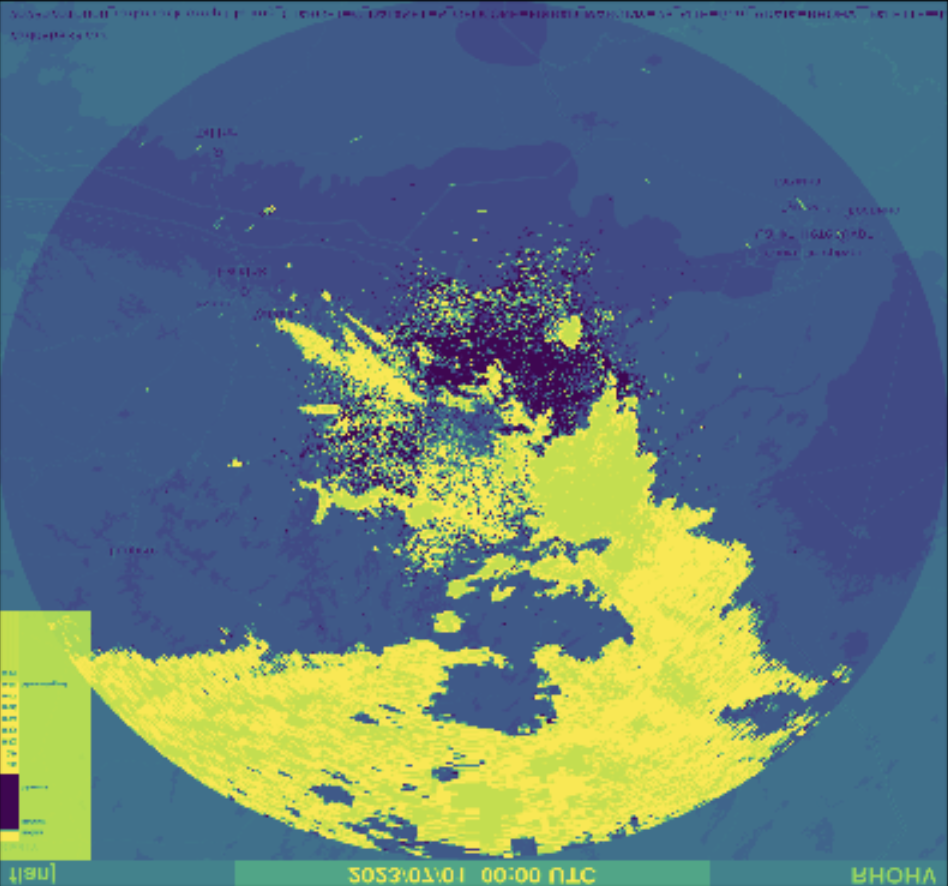

xds = rioxarray.open_rasterio(fs.open(url))xdsxds.isel(band=0).where(xds < 2000).plot()Create Input File List¶

Here we are using fsspec's glob functionality along with the * wildcard operator and some string slicing to grab a list of GeoTIFF files from a s3 fsspec filesystem.

# Initiate fsspec filesystems for reading

fs_read = fsspec.filesystem("s3", anon=True, skip_instance_cache=True)

files_paths = fs_read.glob(

"s3://fmi-opendata-radar-geotiff/2023/01/01/FIN-ACRR-3067-1KM/*24H-3067-1KM.tif"

)

# Here we prepend the prefix 's3://', which points to AWS.

files_paths = sorted(["s3://" + f for f in files_paths])Start a Dask Client¶

To parallelize the creation of our reference files, we will use Dask. For a detailed guide on how to use Dask and Kerchunk, see the Foundations notebook: Kerchunk and Dask.

client = Client(n_workers=8, silence_logs=logging.ERROR)

clientdef generate_virtual_dataset(file):

storage_options = dict(

anon=True, default_fill_cache=False, default_cache_type="none"

)

vds = open_virtual_dataset(

file,

indexes={},

filetype="tiff",

reader_options={

"remote_options": {"anon": True},

"storage_options": storage_options,

},

)

# Pre-process virtual datasets to extract time step information from the filename

subst = file.split("/")[-1].split(".json")[0].split("_")[0]

time_val = datetime.strptime(subst, "%Y%m%d%H%M")

vds = vds.expand_dims(dim={"time": [time_val]})

# Only include the raw data, not the overviews

vds = vds[["0"]]

return vds# Generate Dask Delayed objects

tasks = [dask.delayed(generate_virtual_dataset)(file) for file in files_paths]# Start parallel processing

import warnings

warnings.filterwarnings("ignore")

virtual_datasets = dask.compute(*tasks)Combine virtual datasets¶

combined_vds = xr.concat(virtual_datasets, dim="time")

combined_vdsShut down the Dask cluster¶

client.shutdown()