NetCDF

Generating virtual datasets from NetCDF files

Overview

Within this notebook, we will cover:

How to access remote NetCDF data using VirtualiZarr and Kerchunk

Combining multiple virtual datasets

This notebook shares many similarities with the multi-file virtual datasets with VirtualiZarr notebook. If you are unsure what a block of code does, please refer to that notebook for a more detailed, line-by-line breakdown.

Prerequisites

| Concepts | Importance | Notes |
|---|---|---|
| | Required | Core |
| | Required | Core |
| Parallel virtual dataset creation with VirtualiZarr, Kerchunk, and Dask | Required | Core |
| | Required | IO/Visualization |

Time to learn: 45 minutes

Motivation

NetCDF4/HDF5 is one of the most widely adopted file formats in the earth sciences, supported by much of the community as well as by scientific agencies, data centers, and university labs. A huge amount of legacy data has been generated in this format. Fortunately, using VirtualiZarr and Kerchunk, we can read these datasets as if they were in an Analysis-Ready Cloud-Optimized (ARCO) format such as Zarr.

About the Dataset

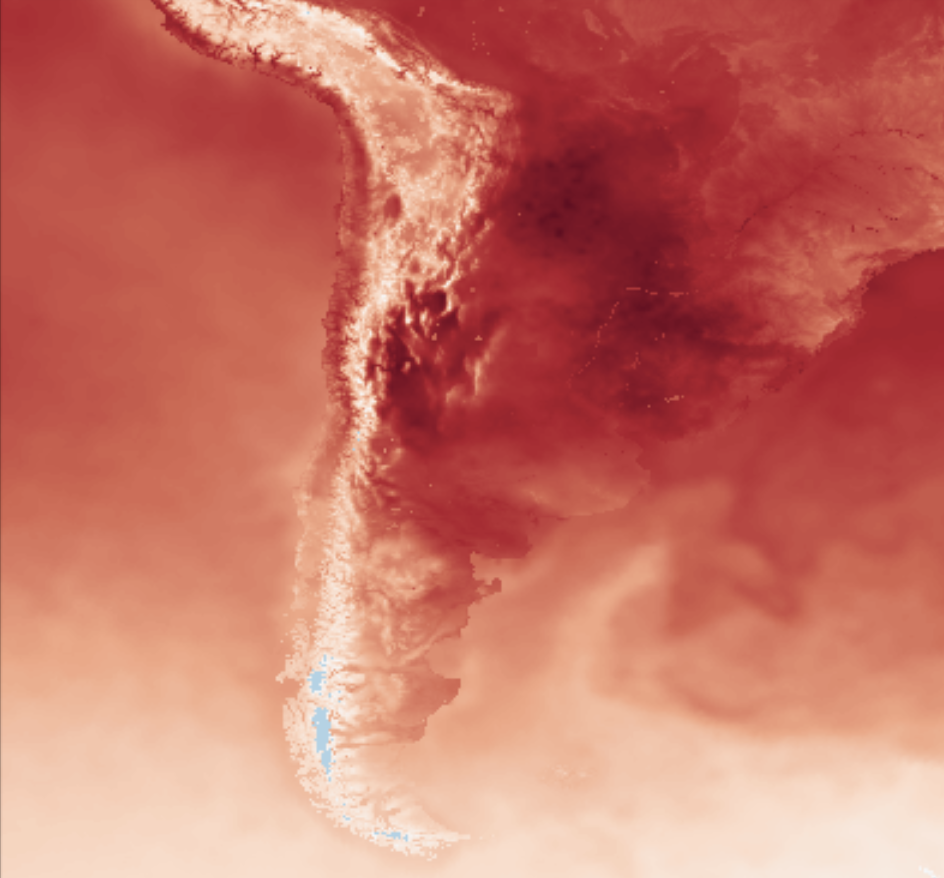

For this example, we will look at a weather dataset composed of multiple NetCDF files. SMN-Arg is a deterministic WRF weather forecasting dataset created by the Servicio Meteorológico Nacional de Argentina that covers Argentina and many neighboring countries at a 4 km spatial resolution.

The model is initialized twice daily, at 00 and 12 UTC, and produces hourly forecasts of variables such as temperature, relative humidity, precipitation, and wind direction and magnitude at multiple atmospheric levels. The output is written to NetCDF files at hourly intervals, with a maximum forecast lead time of 72 hours.

More details on this dataset can be found here.

Flags

In the section below, set subset_flag to True (the default) or False, depending on whether you want this notebook to process the full file list. If it is set to True, only a subset of the file list is processed (recommended).

subset_flag = True

Imports

import logging

import dask

import fsspec

import s3fs

import xarray as xr

from distributed import Client

from virtualizarr import open_virtual_dataset

Examining a Single NetCDF File

Before we use VirtualiZarr to create virtual datasets for multiple files, we can load a single NetCDF file to examine it.

# URL pointing to a single NetCDF file

url = "s3://smn-ar-wrf/DATA/WRF/DET/2022/12/31/00/WRFDETAR_01H_20221231_00_072.nc"

# Initialize an S3 filesystem with anonymous (public) access

fs = s3fs.S3FileSystem(anon=True)

# Use Xarray to open a remote NetCDF file

ds = xr.open_dataset(fs.open(url), engine="h5netcdf")

Here we see the repr from the Xarray Dataset of a single NetCDF file. From examining the output, we can tell that the Dataset dimensions are ['time','y','x'], with time being only a single step.

Later, when we use Xarray's combine_nested functionality, we will need to know which dimension to concatenate along.
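To make the role of the concatenation dimension concrete, here is a small pure-Python sketch (no Xarray involved) of how dimension sizes change when several single-time-step files are joined along time. The helper name `concat_sizes` is hypothetical, and the y and x sizes are taken from the combined dataset shown later in this notebook.

```python
def concat_sizes(per_file_sizes, concat_dim):
    """Return the dimension sizes after concatenating along concat_dim.

    Every other dimension must have the same length in all files.
    """
    combined = dict(per_file_sizes[0])
    for sizes in per_file_sizes[1:]:
        for dim, length in sizes.items():
            if dim == concat_dim:
                combined[dim] += length
            elif combined.get(dim) != length:
                raise ValueError(f"dimension {dim!r} differs between files")
    return combined


# Eight files, each holding one time step on the same y/x grid
one_file = {"time": 1, "y": 1249, "x": 999}
combined = concat_sizes([one_file] * 8, concat_dim="time")
print(combined)

# Bytes needed for one float32 (4-byte) variable with the combined shape
n_bytes = combined["time"] * combined["y"] * combined["x"] * 4
print(n_bytes)
```

The byte count comes out at roughly 40 MB, which is consistent with the per-variable size reported in the repr of the combined dataset.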

Create Input File List

Here we use fsspec's glob functionality with the * wildcard to list the NetCDF files under an S3 prefix, then prepend the s3:// protocol to each path to build the input file list.

# Initiate fsspec filesystems for reading

fs_read = fsspec.filesystem("s3", anon=True, skip_instance_cache=True)

files_paths = fs_read.glob("s3://smn-ar-wrf/DATA/WRF/DET/2022/12/31/12/*")

# Here we prepend the prefix 's3://', which points to AWS.

files_paths = sorted(["s3://" + f for f in files_paths])

# If the subset_flag == True (default), the list of input files will be subset

# to speed up the processing

if subset_flag:
    files_paths = files_paths[0:8]
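The listing logic can be tried offline. The sketch below stands in for the S3 listing with a hard-coded list of keys and uses the standard library's `fnmatch` in place of `fs_read.glob`; the file names are hypothetical, modelled on the single file opened earlier. It illustrates why the `s3://` prefix is added back (the listed paths lack the protocol) and why a plain `sorted` is enough under this naming assumption: the forecast-hour suffix is zero-padded, so alphabetical order matches forecast order.

```python
from fnmatch import fnmatch

# Hypothetical bucket listing, deliberately out of order.
# Real code would obtain this from fs_read.glob(...).
prefix = "smn-ar-wrf/DATA/WRF/DET/2022/12/31/12/"
listing = [
    f"{prefix}WRFDETAR_01H_20221231_12_{hour:03d}.nc" for hour in (10, 2, 0, 1, 9)
]

# Keep only the keys that match the wildcard pattern
pattern = prefix + "*"
matched = [key for key in listing if fnmatch(key, pattern)]

# Add the protocol back and sort into forecast-hour order
files_paths = sorted("s3://" + key for key in matched)

# Optionally keep only the first few files to speed things up
subset_flag = True
if subset_flag:
    files_paths = files_paths[0:3]

for path in files_paths:
    print(path)
```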

Start a Dask Client

To parallelize the creation of our reference files, we will use Dask. For a detailed guide on how to use Dask and Kerchunk, see the Foundations notebook: Kerchunk and Dask.

client = Client(n_workers=8, silence_logs=logging.ERROR)

client

Client

Client-de78eedc-b0ee-11ef-8d78-7c1e5222ecf8

| Connection method: Cluster object | Cluster type: distributed.LocalCluster |

| Dashboard: http://127.0.0.1:8787/status |

Cluster Info

LocalCluster

1f02a086

| Dashboard: http://127.0.0.1:8787/status | Workers: 8 |

| Total threads: 8 | Total memory: 15.61 GiB |

| Status: running | Using processes: True |


# Open one remote NetCDF file as a virtual dataset (chunk references only)
def generate_virtual_dataset(file, storage_options):
    return open_virtual_dataset(
        file, indexes={}, reader_options={"storage_options": storage_options}
    )

storage_options = dict(anon=True, default_fill_cache=False, default_cache_type="none")

# Generate Dask Delayed objects

tasks = [
    dask.delayed(generate_virtual_dataset)(file, storage_options)
    for file in files_paths
]

# Start parallel processing

import warnings

warnings.filterwarnings("ignore")

virtual_datasets = list(dask.compute(*tasks))
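For readers new to the delayed/compute pattern, the sketch below shows the same shape of computation using only the standard library's `concurrent.futures` rather than Dask: one task per file, all submitted up front, with results collected in input order. `describe_file` is a hypothetical stand-in for `generate_virtual_dataset` and performs no I/O; the bucket and file names are also made up.

```python
from concurrent.futures import ThreadPoolExecutor


def describe_file(path, storage_options):
    # Stand-in for the real work of opening a file as a virtual dataset
    return {"source": path, "anon": storage_options["anon"]}


# Hypothetical inputs
files_paths = [f"s3://example-bucket/file_{i:03d}.nc" for i in range(4)]
storage_options = dict(anon=True)

with ThreadPoolExecutor(max_workers=4) as pool:
    # One future per file, analogous to one delayed task per file
    futures = [pool.submit(describe_file, p, storage_options) for p in files_paths]
    # Collecting in submission order keeps results aligned with files_paths
    results = [f.result() for f in futures]

print([r["source"] for r in results])
```

Keeping the results in the same order as the input list matters here, because the later concatenation along time relies on the datasets being ordered by forecast hour.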

Combine virtual datasets and write a Kerchunk reference JSON to store the virtual Zarr store

In the following cell, we combine all the virtual datasets generated above into a single virtual dataset and write its references to disk as a Kerchunk JSON file.

combined_vds = xr.combine_nested(
    virtual_datasets, concat_dim=["time"], coords="minimal", compat="override"
)

combined_vds

<xarray.Dataset> Size: 280MB

Dimensions: (time: 8, y: 1249, x: 999)

Coordinates:

y (y) float32 5kB ManifestArray<shape=(1249,), dtype=flo...

time (time) int32 32B ManifestArray<shape=(8,), dtype=int32...

x (x) float32 4kB ManifestArray<shape=(999,), dtype=floa...

Data variables:

magViento10 (time, y, x) float32 40MB ManifestArray<shape=(8, 1249...

lat (time, y, x) float32 40MB ManifestArray<shape=(8, 1249...

T2 (time, y, x) float32 40MB ManifestArray<shape=(8, 1249...

HR2 (time, y, x) float32 40MB ManifestArray<shape=(8, 1249...

PP (time, y, x) float32 40MB ManifestArray<shape=(8, 1249...

dirViento10 (time, y, x) float32 40MB ManifestArray<shape=(8, 1249...

lon (time, y, x) float32 40MB ManifestArray<shape=(8, 1249...

Lambert_Conformal (time) float32 32B ManifestArray<shape=(8,), dtype=flo...

combined_vds.virtualize.to_kerchunk("ARG_combined.json", format="json")
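It can help to know roughly what ends up in ARG_combined.json. In the Kerchunk version 1 reference format, the file is a JSON object with a `refs` mapping: metadata keys such as `.zgroup` hold inline JSON strings, while chunk keys map to a `[url, offset, length]` triple pointing at a byte range in one of the original NetCDF files. The miniature below is hand-written for illustration only; the URLs, offsets, and lengths are made up, and a real file written by `to_kerchunk` will contain many more keys.

```python
import json

# Hand-written miniature of a Kerchunk v1 reference set (all values are made up)
references = {
    "version": 1,
    "refs": {
        ".zgroup": json.dumps({"zarr_format": 2}),
        "T2/0.0.0": ["s3://example-bucket/file_000.nc", 20480, 1048576],
        "T2/1.0.0": ["s3://example-bucket/file_001.nc", 20480, 1048576],
    },
}

# Round-trip through JSON text, as would happen when writing and reading a file
text = json.dumps(references, indent=2)
loaded = json.loads(text)

# Chunk references are the entries whose value is a [url, offset, length] list
chunk_refs = {k: v for k, v in loaded["refs"].items() if isinstance(v, list)}
for key, (source, offset, length) in sorted(chunk_refs.items()):
    print(f"{key}: {length} bytes at offset {offset} in {source}")
```

Note how consecutive chunks along the first (time) axis point at different source files: the combined store holds no array data itself, only pointers into the original files.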

Shut down the Dask cluster

client.shutdown()