Kerchunk and Pangeo-Forge

Overview

In this tutorial we are going to use the open-source ETL pipeline named pangeo-forge-recipes to generate Kerchunk references.

Pangeo-Forge is a community project to build reproducible, cloud-native ARCO (Analysis-Ready, Cloud-Optimized) datasets. Its Python library, pangeo-forge-recipes, provides the ETL pipeline that processes these dataset “recipes”. While most recipes convert a legacy format such as NetCDF into Zarr stores, pangeo-forge-recipes can also use Kerchunk under the hood to create reference recipes.

It is important to note that Kerchunk can be used independently of pangeo-forge-recipes and in this example, pangeo-forge-recipes is acting as the runner for Kerchunk.
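If you are new to Kerchunk, it may help to see what a "reference" is before running any pipeline. The sketch below hand-writes a tiny reference set in the version 1 JSON layout read by fsspec's reference filesystem: Zarr metadata is stored inline as JSON strings, and each data chunk is a `[url, offset, length]` pointer into the original file. The URL, array shape and byte offsets are made-up placeholders, and the helper `chunk_pointers` is hypothetical, written only for this illustration.

```python
import json

# Illustrative placeholders only: these are not real gridMET byte ranges.
SOURCE_URL = "http://example.com/bi_2021.nc"

refs = {
    "version": 1,
    "refs": {
        # Zarr group and array metadata are stored inline as JSON strings
        ".zgroup": json.dumps({"zarr_format": 2}),
        "bi/.zarray": json.dumps({
            "shape": [2, 4, 4],
            "chunks": [1, 4, 4],
            "dtype": "<f4",
            "compressor": None,
            "fill_value": None,
            "filters": None,
            "order": "C",
            "zarr_format": 2,
        }),
        # xarray reads dimension names from this attribute
        "bi/.zattrs": json.dumps({"_ARRAY_DIMENSIONS": ["day", "lat", "lon"]}),
        # Each chunk (1 x 4 x 4 float32 = 64 bytes) points at a byte range
        "bi/0.0.0": [SOURCE_URL, 4096, 64],
        "bi/1.0.0": [SOURCE_URL, 4160, 64],
    },
}


def chunk_pointers(reference_set):
    """Return {key: (url, start, end)} for every byte-range reference."""
    pointers = {}
    for key, value in reference_set["refs"].items():
        if isinstance(value, list):  # inline metadata values are strings
            url, offset, length = value
            pointers[key] = (url, offset, offset + length)
    return pointers


print(chunk_pointers(refs))
```

Readers such as fsspec's `reference://` filesystem use a mapping like this to serve chunks to Zarr and xarray. Kerchunk's job, with or without pangeo-forge-recipes, is to generate it by scanning the source files.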

Prerequisites

| Concepts | Importance | Notes |
|---|---|---|
| | Required | Core |
| | Required | Core |
| | Required | IO/Visualization |

Time to learn: 45 minutes

Getting to Know The Data

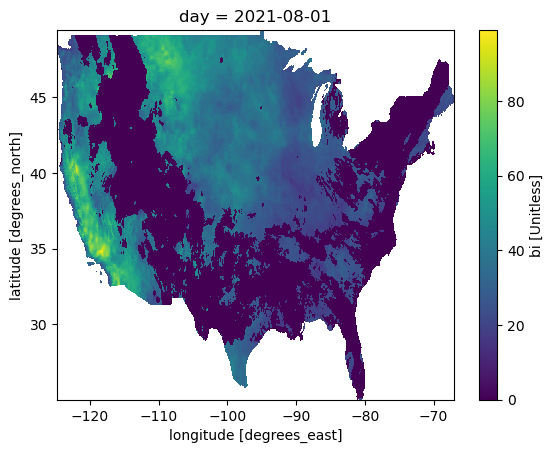

gridMET is a high-resolution daily meteorological dataset covering CONUS from 1979 to 2023. It is produced by the Climatology Lab at UC Merced. In this example, we are going to create a virtual Zarr dataset of a derived variable, the Burning Index (bi).

Examine a Single File

import xarray as xr

ds = xr.open_dataset(
    "http://thredds.northwestknowledge.net:8080/thredds/dodsC/MET/bi/bi_2021.nc"
)

Plot the Dataset

ds.sel(day="2021-08-01").burning_index_g.plot()


Create a File Pattern

To build our pangeo-forge pipeline, we need to create a FilePattern object, which describes all of our input URLs. The dataset is stored as one file per year; the code below lists the years 1979 through 2022.

To speed up our example, we will prune our recipe to keep only the first two entries in the FilePattern.

from pangeo_forge_recipes.patterns import ConcatDim, FilePattern

years = list(range(1979, 2022 + 1))
time_dim = ConcatDim("time", keys=years)

def format_function(time):
    return f"http://www.northwestknowledge.net/metdata/data/bi_{time}.nc"

pattern = FilePattern(format_function, time_dim, file_type="netcdf4")
pattern = pattern.prune()
pattern

pattern

<FilePattern {'time': 2}>
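Conceptually, the FilePattern maps each key along its single concat dimension to one URL. The plain-Python sketch below does not call pangeo-forge-recipes; it reproduces that mapping with the same `format_function` and mimics pruning by keeping only the first two keys, which is consistent with the `<FilePattern {'time': 2}>` output above.

```python
# Plain-Python illustration of the mapping a one-dimensional FilePattern
# describes; it does not call pangeo-forge-recipes.
years = list(range(1979, 2022 + 1))


def format_function(time):
    return f"http://www.northwestknowledge.net/metdata/data/bi_{time}.nc"


# One URL per key along the single concat dimension
urls = {year: format_function(year) for year in years}

# Keep only the first two keys, mirroring what pattern.prune() is used for here
pruned = dict(list(urls.items())[:2])

print(len(urls))
for year, url in pruned.items():
    print(year, url)
```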

Create a Location For Output

We write to local storage for this example, but the reference file could also be shared via cloud storage.

target_root = "references"
store_name = "Pangeo_Forge"

Build the Pangeo-Forge Beam Pipeline

Next, we will chain together several transforms to build an Apache Beam pipeline with Pangeo-Forge.

Processing steps are chained together with the pipe operator (|). Once the pipeline is built, it is run in the following cell.

The steps are as follows:

1. Create a starting collection from the items of our input FilePattern.
2. Pass those items to OpenWithKerchunk, which creates references for each file.
3. Combine the per-file references into a single reference file and write it with WriteCombinedReference.

Just like Kerchunk, you can specify the reference file type as either .json or .parquet.

Note: You can add additional processing steps in this pipeline.
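Stripped of Beam, the three steps above amount to a chain of functions, each consuming the previous step's output. The sketch below is a hypothetical, Beam-free analogue: `create`, `open_with_refs` and `combine` are illustrative stand-ins, not the pangeo-forge-recipes transforms, and the "references" are fake dictionaries. It also shows why the combine step needs each file's position: inputs may arrive in any order.

```python
from functools import reduce


def create(items):
    # Step 1: materialize the (position, url) pairs, like pattern.items()
    return list(items)


def open_with_refs(pairs):
    # Step 2: produce one (fake) reference set per input file
    return [(position, {"source": url}) for position, url in pairs]


def combine(indexed_refs):
    # Step 3: order by file position, then merge into a single result
    ordered = sorted(indexed_refs, key=lambda pair: pair[0])
    return {"sources": [refs["source"] for _, refs in ordered]}


def run(stages, data):
    # Apply stages left to right, the way `a | b | c` chains Beam transforms
    return reduce(lambda acc, stage: stage(acc), stages, data)


items = [(1, "http://example.com/bi_1980.nc"), (0, "http://example.com/bi_1979.nc")]
result = run([create, open_with_refs, combine], items)
print(result)
```

In this analogue, an additional processing step is just another function inserted into the `stages` list; in the real pipeline it is another `|` transform.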

import apache_beam as beam
from pangeo_forge_recipes.transforms import OpenWithKerchunk, WriteCombinedReference

transforms = (
    # Create a beam PCollection from our input file pattern
    beam.Create(pattern.items())
    # Open with Kerchunk and create references for each file
    | OpenWithKerchunk(file_type=pattern.file_type)
    # Use Kerchunk's `MultiZarrToZarr` functionality to combine and
    # then write references. Note: Setting the correct concat_dims
    # and identical_dims is important.
    | WriteCombinedReference(
        target_root=target_root,
        store_name=store_name,
        output_file_name="reference.json",
        concat_dims=["day"],
        identical_dims=["lat", "lon", "crs"],
    )
)
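To see why `concat_dims` and `identical_dims` matter, consider what combining two per-file reference sets involves. In the toy sketch below (not Kerchunk's MultiZarrToZarr, which also rewrites array metadata and coordinate values), each yearly file is assumed to contribute exactly one chunk of `bi` along its first axis, standing in for "day". Chunks of the concatenated variable are renumbered by file position, while variables declared identical are taken once, from the first file. The helper `combine_refs` and all URLs and byte ranges are hypothetical.

```python
# Toy per-file references: one `bi` chunk per file plus lat/lon coordinate
# chunks that are identical across files. URLs and byte ranges are made up.
file_1979 = {
    "bi/0.0.0": ["http://example.com/bi_1979.nc", 1000, 500],
    "lat/0": ["http://example.com/bi_1979.nc", 100, 50],
    "lon/0": ["http://example.com/bi_1979.nc", 200, 50],
}
file_1980 = {
    "bi/0.0.0": ["http://example.com/bi_1980.nc", 1000, 520],
    "lat/0": ["http://example.com/bi_1980.nc", 100, 50],
    "lon/0": ["http://example.com/bi_1980.nc", 200, 50],
}


def combine_refs(per_file, concat_vars, identical_vars):
    """Merge chunk references, assuming one chunk per file along axis 0."""
    combined = {}
    # Identical variables: keep the reference from the first file only
    for key, ref in per_file[0].items():
        if key.split("/")[0] in identical_vars:
            combined[key] = ref
    # Concatenated variables: shift the leading chunk index by file position
    for position, refs in enumerate(per_file):
        for key, ref in refs.items():
            var, chunk = key.split("/")
            if var in concat_vars:
                first, *rest = chunk.split(".")
                new_chunk = ".".join([str(int(first) + position), *rest])
                combined[f"{var}/{new_chunk}"] = ref
    return combined


combined = combine_refs([file_1979, file_1980], {"bi"}, {"lat", "lon"})
for key in sorted(combined):
    print(key, combined[key])
```

In this toy, a variable listed in neither set is simply dropped, which is one way an incorrect setting can silently produce an incomplete dataset.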

%%time
with beam.Pipeline() as p:
    p | transforms

Running this cell fails. The last line of the (long) Apache Beam traceback is:

ValueError: No dimension found with name day [while running '[6]: Create|OpenWithKerchunk|WriteCombinedReference/WriteCombinedReference/CombineReferences/Get just the positions']

The failing step, CombineReferences, calls k.find_position(self.sort_dimension) on the index attached to each input file, to look up that file's position along the dimension being combined. That index only knows the dimension names defined in the FilePattern, here "time", while the requested dimension is "day", the value passed in concat_dims. One way to resolve the mismatch is to name the FilePattern dimension after the dataset's time dimension, i.e. ConcatDim("day", keys=years), and to rename the format_function argument to day, so that the FilePattern dimension and concat_dims agree.
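This name mismatch can be reproduced without Beam. The sketch below uses a hypothetical `ToyIndex`, a simplified stand-in (not the pangeo-forge-recipes `Index` class) that records each input file's position under the FilePattern's dimension name and raises the same message when asked for a name it does not have.

```python
class ToyIndex:
    """Hypothetical stand-in for the per-file index; not the real class."""

    def __init__(self, positions):
        # Maps a FilePattern dimension name -> this file's position
        self.positions = positions

    def find_position(self, dim_name):
        if dim_name in self.positions:
            return self.positions[dim_name]
        raise ValueError(f"No dimension found with name {dim_name}")


def first_positions(indexes, sort_dimension):
    # Look up every file's position along the requested dimension
    return [index.find_position(sort_dimension) for index in indexes]


# FilePattern dimension named "time", but the combine step asks for "day"
time_indexes = [ToyIndex({"time": 0}), ToyIndex({"time": 1})]
try:
    first_positions(time_indexes, "day")
    error_message = None
except ValueError as err:
    error_message = str(err)
print(error_message)

# FilePattern dimension named "day": the names line up
day_indexes = [ToyIndex({"day": 0}), ToyIndex({"day": 1})]
print(first_positions(day_indexes, "day"))
```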